Hacking tractors

Hacking tractors. Yeah, sure… Wait, what?

Just a decade ago you would not have considered a vehicle manufacturer to be a software development company. Today, however, millions of lines of source code run in our cars. As an obvious consequence, automotive security has gained more and more attention in recent years. But until now this mainly affected passenger vehicles.

Manufacturers of agricultural machinery could not avoid this fate either. Tractor companies, like the American icon John Deere, have evolved into tech companies. And many of them, if not all, are learning the cyber security lesson the hard way.

One of the first stories where the words ‘tractors’ and ‘hacking’ got together was related to maintenance. John Deere tried to put legal and technical protection on all of the parts in their tractors (as a kind of Digital Rights Management protection), basically bringing these parts under the Digital Millennium Copyright Act (DMCA). As a consequence, only their own personnel could legally do any maintenance on their tractors. The rest of the story is game theory: farmers started fighting for their “right to repair”, and had the firmware of their tractors updated to circumvent this protection and maybe even unlock some features. Which was basically hacking; and that is illegal.

But in 2015 a group of farmers and digital rights experts applied for an exemption of vehicle software from the DMCA. They won the case, which allowed owners to repair their vehicles. Finally, in 2018 this was extended to third parties, now allowing anyone to access and modify the software of the vehicles, and paving the way even for bug hunting in this domain.

Thanks to this, research groups can now study these vehicles as well. One example is the Tractor Hacking team at the California Polytechnic State University. The team runs a project that focuses on reverse engineering the CAN bus of John Deere tractors. They want to understand the tractors' electronic system, and thus make it easier to interfere with it.

But this story is not specifically about hacking the tractors themselves.

It’s about the complex IT infrastructure that these vehicles connect to.

The overture – early tractor hacking works

Earlier this year, a group of ethical hackers presented two vulnerabilities in the https://myjohndeere.deere.com/ website, allowing unauthorized access to sensitive data of John Deere customers, including owners’ names and addresses, as well as the vehicle they own.

The idea of ‘hacking tractors’, i.e. taking a look at the website from a security perspective, came a few months earlier, when the researcher working under the name Sick Codes realized that the vehicles were sending files to and receiving files from a server while working out in the fields. Things like this always attract ethical hackers. Intending to report any finding back to John Deere, he signed up for a free developer account.

Unlimited usernames

He found the first vulnerability, which allowed enumeration of all possible usernames on the site, even before he logged in.

When a user typed in a username, the website looked up existing usernames after each new character. This behavior suggested two things. First, that there was no rate limit, so one could call this REST API an unlimited number of times to enumerate all possible accounts. Second, that this look-up happened in an unauthenticated way, and the API was open to the public. Remember, the look-up happened before the user logged in; actually, during the login process itself!

To see if this is really true, the researcher sniffed the HTTP request that called the look-up API and used curl to send these requests automatically. After some trials he ended up with the minimum set of cookies with which the API still responded, but which allowed sending username availability requests without any rate limits.

As a proof-of-concept, he took the list of Fortune 1000 companies and tried to enroll with their names to check which account names were already active. 192 out of the 1000 company names (around 20%!) returned ‘This username is already taken’, revealing a valid existing user. The fact that the check was case insensitive also helped.

After reporting this to John Deere (which is an interesting story on its own), the issue has been fixed by introducing a limit of 5 look-ups per 10 seconds.
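How John Deere implemented the limit is not public. As a rough illustration only, a limit of 5 look-ups per 10 seconds could be enforced with a sliding-window counter like the one sketched below; the class name, the method name and the use of an IP address as the client key are assumptions made for this example.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `limit` calls per `window` seconds for each client key."""

    def __init__(self, limit=5, window=10.0):
        self.limit = limit
        self.window = window
        # client key -> timestamps (in seconds) of the recent accepted calls
        self._calls = defaultdict(deque)

    def allow(self, client, now):
        """Return True if `client` may perform a look-up at time `now`."""
        calls = self._calls[client]
        # Forget calls that have fallen out of the window.
        while calls and now - calls[0] >= self.window:
            calls.popleft()
        if len(calls) >= self.limit:
            return False
        calls.append(now)
        return True


limiter = SlidingWindowLimiter(limit=5, window=10.0)

# Six look-ups within one second: the first five pass, the sixth is rejected.
results = [limiter.allow("203.0.113.7", now=t * 0.2) for t in range(6)]
print(results)

# Once the window has passed, the same client may try again.
print(limiter.allow("203.0.113.7", now=11.0))
```

A limit like this slows enumeration down but does not remove the underlying problem: the look-up still tells an unauthenticated caller whether a username exists.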

Sensitive information exposure

The first issue suggested that there was more to find. The second problem was related to another API, which revealed sensitive personal information of all John Deere equipment owners without any authorization.

After doing some experiments, the ethical hacker ended up at the page https://terminals.deere.com/. The API behind it was a look-up for Vehicle Identification Numbers (VINs) and some other inputs, like the serial number of equipment. Any authenticated user (for instance a developer account) could access this interface. It lacked authorization, however: any such user could access the personal data of all equipment owners, which is clearly a horizontal authorization problem, commonly referred to as ‘Authorization bypass through user-controlled key’.

As a proof-of-concept, the researcher used a VIN found on the Internet. The API returned the owner’s personal details, such as the name and the address, as well as the equipment’s permanent ID and its status. But there was still a suspicion that this was ‘just’ a retailer. So, he gathered some 150 other VINs from a public auction site, and all of them worked. This confirmed that the exposed data actually referred to real end users.

John Deere fixed the issue by implementing a proper horizontal authorization between the users.
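The details of the fix are not public. In general, a horizontal authorization check means that the server compares the owner of the requested record with the identity of the logged-in user before returning anything. A minimal sketch of that idea follows; the data model, the in-memory store and all names in it are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Equipment:
    vin: str
    owner_id: str
    owner_address: str


# Hypothetical in-memory store standing in for the real back end.
EQUIPMENT = {
    "VIN-0001": Equipment("VIN-0001", "alice", "1 Farm Road"),
    "VIN-0002": Equipment("VIN-0002", "bob", "2 Field Lane"),
}


class Forbidden(Exception):
    """Raised when the caller may not see the requested record."""


def lookup_equipment(current_user, vin):
    """Return the record for `vin` only if it belongs to `current_user`."""
    record = EQUIPMENT.get(vin)
    # Use the same error for "does not exist" and "not yours", so the
    # response does not reveal which VINs are registered.
    if record is None or record.owner_id != current_user:
        raise Forbidden("no access to this equipment")
    return record


print(lookup_equipment("alice", "VIN-0001").owner_address)

try:
    lookup_equipment("alice", "VIN-0002")
except Forbidden as error:
    print("denied:", error)
```

The important point is that the VIN supplied by the client is treated as a search key only, never as proof that the caller is entitled to the data behind it.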

However, the above two vulnerabilities were only the overture. In one of the last entries in the Comments section of the original article the Admin stated: ‘We have more to come 🙂’

The main parts – a baroquish movement of vulnerabilities

A group of researchers (including Sick Codes) continued hacking tractors. Later this year, in August 2021, they published a set of additional vulnerabilities they had found at two equipment vendors: 11 in John Deere and 3 in CNH Industrial sites. They presented many of these at the famous Def Con 29 conference under the title “The Agricultural Data Arms Race Exploiting a Tractor Load of Vulns”. You can find the presentation here.

In general, these vulnerabilities allowed discovering sensitive personal details of tractor and other equipment owners. Who they are. Where they are. How old they are. How long they have owned the equipment in question. And much more.

Let’s see these vulnerabilities one by one. We’ll map them to the bug categories from the OWASP Top 10 for reference. Needless to say, both John Deere and CNH Industrial fixed these already (at least those in the customer facing pages). In doing this, they got assistance from the aforementioned ethical hacking group and from the Industrial Control Systems CERT.

Injections

Injection flaws are A03 on the OWASP Top Ten 2021, but they were at the very top of the list for more than a decade. Three types of injection were found in John Deere sites: the (in)famous SQL injection, Cross-site Scripting (XSS) and HTTP request smuggling.

SQL injection

There were two SQL injection vulnerabilities in John Deere sites. One was in the MachineBook page used for on-site demonstration, and the other in the Supplier Invoice Portal.

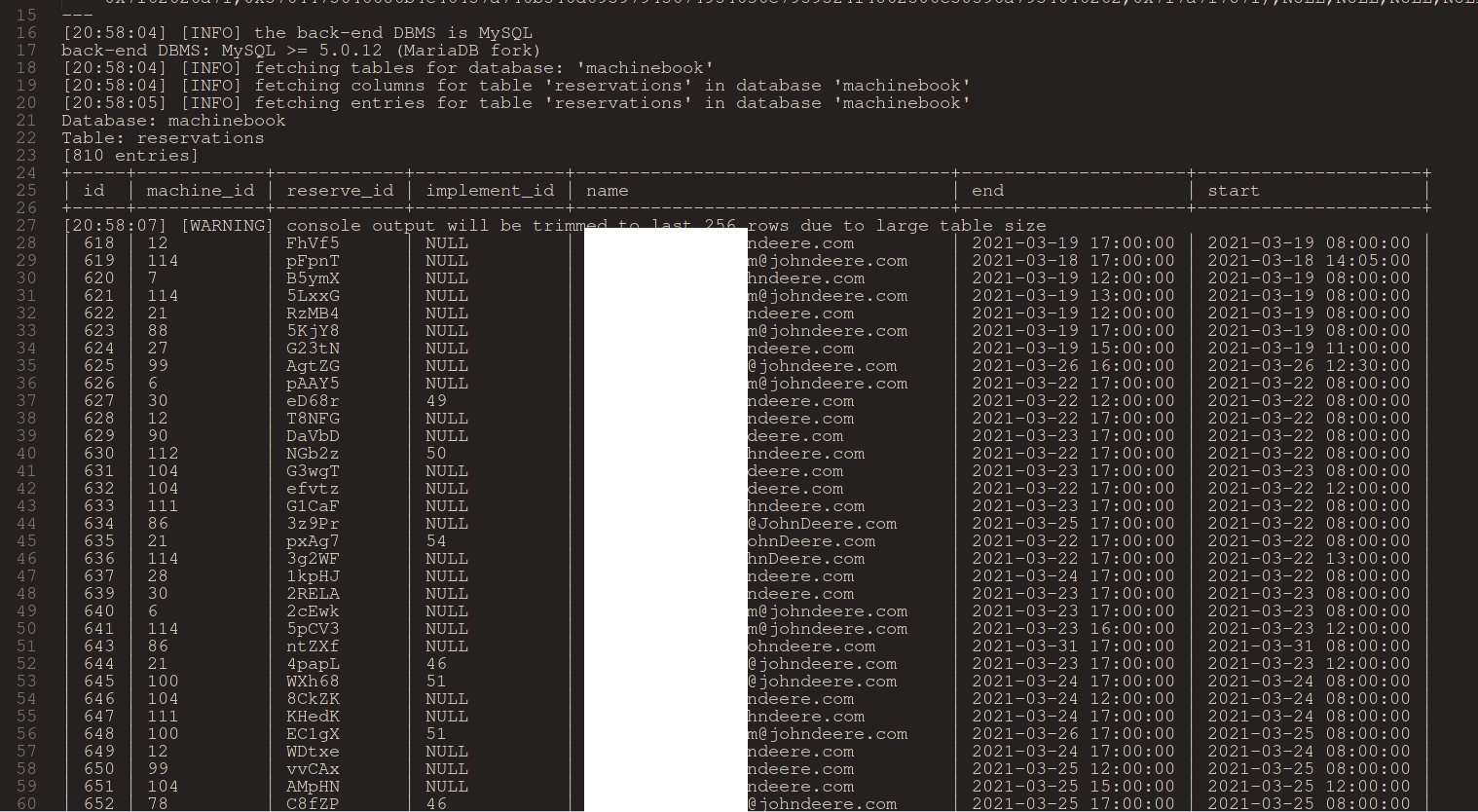

The eqid parameter of the Machinebook page (https://machinebook.deere.com/nelson/machinebook/machine_list.php) appeared to be vulnerable to time-based blind SQL injection. In a blind SQL injection the attack does not reveal the data directly, either because the application never displays the query results, or because the injectable statement is not a SELECT that dumps data but rather an INSERT, an UPDATE or similar, which typically does not return any records and just modifies data in the database tables. The attacker can still reveal the desired information (i.e. the full content of database tables) character by character by having an oracle: an indication of whether they have hit the right character in a field of a record in the database or not. In the time-based variant this oracle is the response time, since the injected condition makes the database pause only when the guess is correct. This technique allowed a remote unauthenticated user to gain access to the internal database containing, for instance, employee data.
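To see why a single yes/no signal is enough to read out whole fields, consider the following toy simulation. It does not talk to any server or database: the response_time function merely pretends to be a vulnerable page that answers slowly when a guessed character is right. The secret value, the delay and all function names are invented for this sketch.

```python
import string

SECRET = "deere"  # hypothetical value stored in the simulated database
DELAY = 5.0       # seconds the injected condition would pause when it is true


def response_time(position, guess):
    """Simulated server: a slow response only if the guessed character matches."""
    hit = position < len(SECRET) and SECRET[position] == guess
    return DELAY if hit else 0.05


def extract(max_length=16):
    """Recover the secret one character at a time, using timing as the oracle."""
    recovered = ""
    for position in range(max_length):
        for candidate in string.ascii_lowercase:
            if response_time(position, candidate) >= DELAY:
                recovered += candidate
                break
        else:
            # No candidate caused a delay: the end of the value was reached.
            break
    return recovered


print(extract())
```

Each character costs at most one request per candidate symbol, so the effort grows only linearly with the length of the data. That is why blind injection is slow but still practical.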

The other SQL injection was in the Supplier Invoice Purchase Order Servlet page. The specific injection field was the purchase order itself (the poList parameter). One example attack string was:

https://invoice.deere.com/invoice/servlet/com.deere.u90242.invoice.view.servlets.SelectPOServlet?userAction=searchUnitsByPo&poList=1%27)%20UNION%20SELECT%20*%20FROM%20DJDBP01.SM_IND_ORD_HDR%20--

This was not a valid attack payload though (most probably due to using SELECT *), but the returned error page indicated that the SQL statement failed, and that was a clear indication of a successful injection.
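The root cause in cases like this is that user input ends up in the text of the SQL statement. The sketch below contrasts string concatenation with a bound parameter, using Python's built-in sqlite3 module. The tables, the data and the function names are made up for illustration and are not related to the real John Deere schema. Unlike the attack string above, the payload here selects exactly two columns so that the UNION is valid.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (po TEXT, unit TEXT)")
conn.execute("CREATE TABLE secrets (name TEXT, value TEXT)")
conn.execute("INSERT INTO orders VALUES ('1', 'gearbox')")
conn.execute("INSERT INTO secrets VALUES ('admin', 'hunter2')")

# Same shape as the attack string above: close the quote and the bracket,
# append a UNION, and comment out the rest of the statement.
payload = "1') UNION SELECT name, value FROM secrets --"


def search_unsafe(po):
    # Vulnerable: user input is concatenated into the statement text.
    sql = "SELECT po, unit FROM orders WHERE po IN ('" + po + "')"
    return conn.execute(sql).fetchall()


def search_safe(po):
    # Safe: the value is bound as a parameter and is never parsed as SQL.
    sql = "SELECT po, unit FROM orders WHERE po IN (?)"
    return conn.execute(sql, (po,)).fetchall()


print(sorted(search_unsafe(payload)))  # also contains the row from `secrets`
print(search_safe(payload))            # no order has this odd number
print(search_safe("1"))                # a normal look-up still works
```

With the bound parameter, the whole payload is compared as a literal purchase order number, so it simply matches nothing.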

Cross-site scripting

There were several XSS vulnerabilities in John Deere pages, all DOM-based (client-side). Without further explanation, we just list the vulnerable pages below together with the proof-of-concept URLs, emphasizing the payload (the value of which is basically the URL-encoded "><script>alert(1)</script> string):

- Reset password (/forgetUser page, TARGET parameter):

https://myjohndeere.deere.com/wps/portal/myjd/forgetUser?TARGET=%22%3E%3Cscript%3Ealert%281%29%3C%2Fscript%3E

- Registration (/registration page, SRC parameter):

https://myjohndeere.deere.com/wps/portal/myjd/registration?SRC=%22%3E%3Cscript%3Ealert%281%29%3C%2Fscript%3E&TARGET=%22%3E%3Cscript%3Ealert%281%29%3C%2Fscript%3E&requestFlow=%22%3E%3Cscript%3Ealert%281%29%3C%2Fscript%3E

- Registration servlet. Specifically, the EM_MandatoryPhone parameter appeared to be vulnerable, but note that the URL below contains the same payload for all parameters (we are not indicating all possible parameters):

https://registration.deere.com/servlet/com.deere.u90950.webregistration.view.servlets.StandardRegistrationAddServlet?EM_MandatoryPhone=%22%3E%3Cscript%3Ealert%281%29%3C%2Fscript%3E&EM_SelectAppname=%22%3E%3Cscript%3Ealert%281%29%3C%2Fscript%3E&EM_SelectColor=…

- Registration, sign-in (/SignInServlet, various parameters, just two indicated below):

https://registration.deere.com/SignInServlet?EM_FORGOT=%22%3E%3Cscript%3Ealert%281%29%3C%2Fscript%3E&EM_REG=…

- Supplier Invoice Login Servlet of the Supplier Invoice Portal (entering an email address, and providing "><script>alert(1);</script> in the subsequent field):

https://invoice.deere.com/invoice/servlet/com.deere.u90242.invoice.view.servlets.EmailLoginServlet

- Supplier Invoice Purchase Order Servlet of the Supplier Invoice Portal (same attack payload as above):

https://invoice.deere.com/invoice/servlet/com.deere.u90242.invoice.view.servlets.SelectPOServlet#
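To make the payload in these URLs easier to read, the short sketch below decodes it and shows what output encoding does to it. The two render functions and the input element they produce are hypothetical. They only illustrate what happens when a parameter value is written into markup with and without encoding, here in Python rather than in the browser-side JavaScript where a DOM-based flaw lives.

```python
import html
from urllib.parse import unquote

encoded = "%22%3E%3Cscript%3Ealert%281%29%3C%2Fscript%3E"
payload = unquote(encoded)
print(payload)


def render_unsafe(value):
    # The leading "> of the payload closes the attribute and the tag,
    # so the <script> element that follows becomes part of the page.
    return '<input name="TARGET" value="' + value + '">'


def render_safe(value):
    # html.escape turns &, <, > and quotes into entities,
    # so the payload stays inside the attribute value as plain text.
    return '<input name="TARGET" value="' + html.escape(value, quote=True) + '">'


print(render_unsafe(payload))
print(render_safe(payload))
```

The same principle applies on the client side: writing untrusted values through text-only APIs, rather than into HTML markup, keeps them from being interpreted as code.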

HTTP request smuggling

One of the problems found was an HTTP Request Smuggling vulnerability (CWE-444) that allowed an unauthenticated user to bypass front-end security controls in qual.contest.deere.com, adds-eu.deere.com and admin.qual.contest.deere.com.

Request smuggling (closely related to request splitting) is a kind of injection where the attacker crafts an HTTP request whose boundaries are ambiguous, for instance by placing maliciously formed data into a header. Newlines in this user-controlled data, or conflicting Content-Length and Transfer-Encoding headers, make different components disagree on where the request ends, which effectively creates an additional, fake HTTP request on top of the original one. The trick is that a firewall or intrusion detection system (see A09 Security Logging and Monitoring Failures) may interpret the data as a single request, while an intermediary (such as a proxy or a load balancer) or the back end actually processes two HTTP requests: one genuine, and one initiated by the attacker in this tricky way.
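One common defensive idea is to reject any request whose framing is ambiguous before it is forwarded, so that every component sees the same request boundaries. The function below is a simplified sketch of such a check. Its name and the list-of-pairs header representation are assumptions for this example, and real servers and proxies implement far more thorough validation.

```python
def is_ambiguous(headers):
    """Return True if the header list could be framed in more than one way.

    `headers` is a list of (name, value) pairs exactly as received.
    """
    names = [name.strip().lower() for name, _ in headers]

    # A CR or LF inside a name or value could start a second, smuggled request.
    for name, value in headers:
        if "\r" in name + value or "\n" in name + value:
            return True

    # Two different framing headers let the front end and the back end
    # disagree on where the request body ends.
    if "content-length" in names and "transfer-encoding" in names:
        return True

    # Repeated Content-Length headers are ambiguous as well.
    if names.count("content-length") > 1:
        return True

    return False


normal = [("Host", "example.org"), ("Content-Length", "11")]
conflicting = [("Content-Length", "6"), ("Transfer-Encoding", "chunked")]
injected = [("X-Note", "hello\r\n\r\nGET /admin HTTP/1.1")]

print(is_ambiguous(normal))
print(is_ambiguous(conflicting))
print(is_ambiguous(injected))
```

Rejecting such requests outright is usually safer than trying to repair them, because any repair is one more interpretation that another component may not share.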

Some more information exposure

We have already seen some very bad information exposure examples in the tractor hacking overture. The following vulnerabilities are further mistakes of this kind, both in John Deere and CNH Industrial sites. OWASP lists these in A02 Cryptographic Failures, specifically under the sub-category of information leakage. Some of these issues are also authorization issues, which belong to A01 Broken Access Control in the OWASP Top 10 2021.

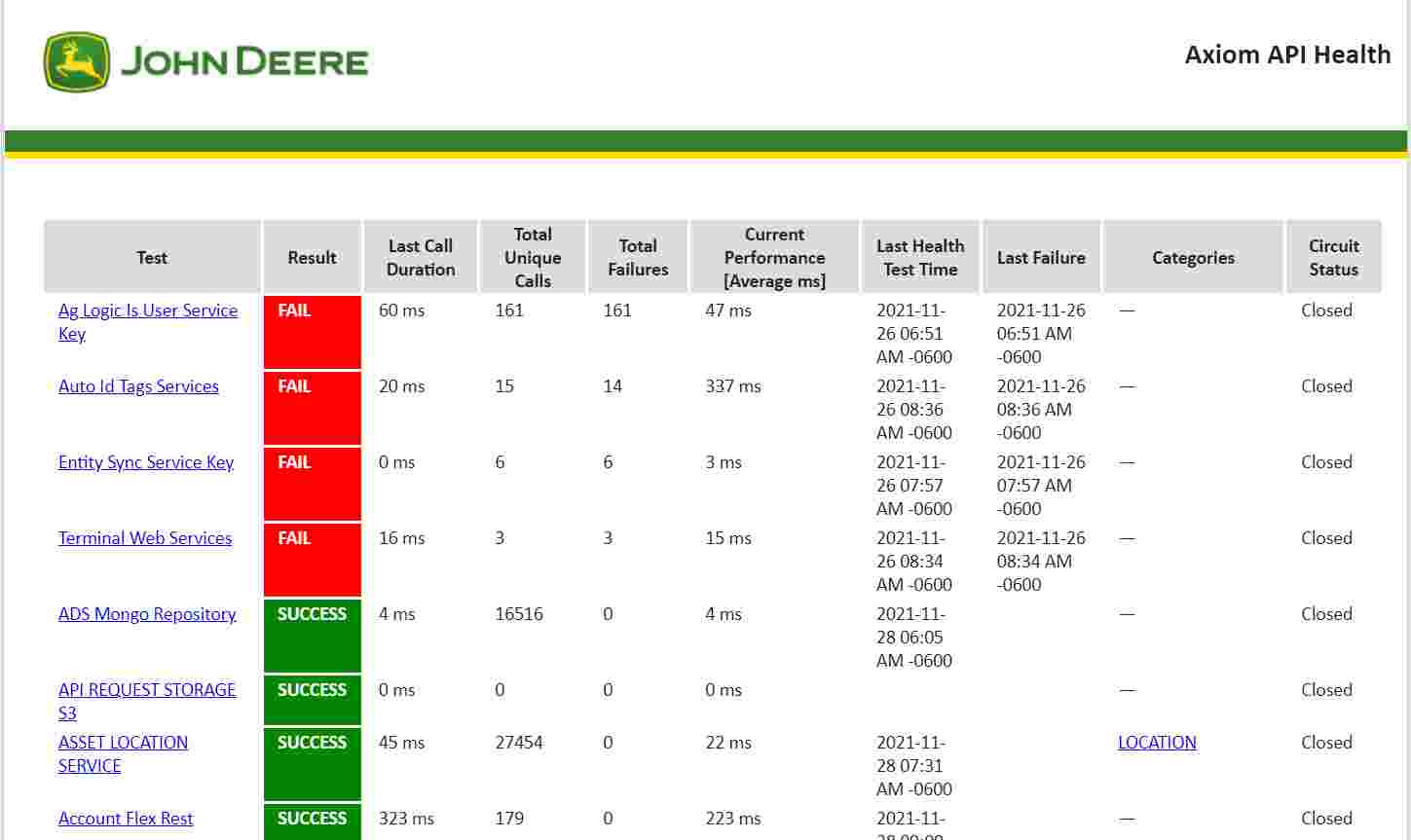

The first vulnerability is a pretty trivial case. The Axiom API in the John Deere site exposes a health page publicly, which contains mission-critical health data related to services and assets within *.deere.com subdomains. Making it public may have been intentional, and this may also be the reason behind the fact that the page is still publicly available (as of November 2021).

But while some of this service-related data may be important and valuable information to some users, exposing it publicly may help attackers in hacking tractors, telling them when and how to craft a specific type of attack. Of course, it would not have helped to limit it to enrolled users only, since anyone can register in the system. What would help is to distinguish between the various services, reveal only those that are absolutely necessary, and hide those that may be sensitive. This basically means applying the principle of least privilege (discussed as part of A04 Insecure Design on the OWASP Top Ten).
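Applied to a health page, least privilege could mean publishing an explicit allow-list of services, and only their up/down status. The sketch below shows the idea. The service names, the fields and the structure of the health data are invented, since the real format of the Axiom health page is not described here.

```python
# Hypothetical allow-list: only these services appear on the public page.
PUBLIC_SERVICES = {"website", "dealer-locator"}

# Hypothetical internal health data with details that should stay private.
internal_health = {
    "website": {"status": "up", "host": "web-01.internal", "version": "4.2.1"},
    "dealer-locator": {"status": "degraded", "host": "loc-03.internal", "version": "1.9.0"},
    "billing-db": {"status": "up", "host": "db-07.internal", "version": "12.4"},
}


def public_view(health):
    """Return only allow-listed services, and only their status field."""
    return {
        name: {"status": info["status"]}
        for name, info in health.items()
        if name in PUBLIC_SERVICES
    }


print(public_view(internal_health))
```

An allow-list fails closed: a newly added internal service stays hidden until someone decides that it should be public.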

The other information exposure problem was in a CNH Industrial page, which exposed the JavaMelody monitoring server publicly. On this page one could see the session data of all current website users, including the authentication cookies! This alone poses an extremely high risk, because it allows anyone to impersonate an existing user by stealing the authentication cookie and hijacking the session. This is clearly an Identification and Authentication Failure (A07 on the OWASP Top 10 list). But the page also exposed some other sensitive information, like the full names of users, their IP addresses and locations, user IDs, the HTML code of the currently visited pages, etc.

Finally, in the EU API Dev Portal WebApplication of CNH Industrial, if one knew an existing user’s email address, they could get all kinds of sensitive information about that user, such as user id, username, permissions, roles, and full name. The URL euapidevportpwbapp02.azurewebsites.net/api/auth?email=… was publicly accessible and responded with all of the above data in a JSON structure.

Using vulnerable and outdated components

In the case of A06 Vulnerable and Outdated Components, it’s all about introducing a vulnerability into your application via the vulnerabilities of your dependencies. In this sense, ‘it’s not your fault’, but it definitely ‘is your problem’. So, the only mistake the developers made in this case was not upgrading the packages they used to the latest versions, in which these vulnerabilities had already been fixed.

One of these problems was a misconfiguration problem in the Pega Chat platform (versions 7.4.0 through 8.5.x), listed in the Common Vulnerabilities and Exposures database under CVE-2021-27653. John Deere didn’t change the default configuration of Pega on their kms.deere.com site that uses this package. The default value allowed access to mission-critical data, similarly to the Axiom-related problem we discussed in the previous section; see more details on this vulnerability on the vendor’s website. The CVE entry had already been published in February 2021, so there was definitely enough time to upgrade; which they did not do.
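Checks like this are easy to automate. As a small illustration, the function below tests whether a version string falls into the affected range quoted above. Reading "through 8.5.x" as "anything below 8.6.0" is an assumption made for this sketch, as are the function names. A real project would rather rely on a dependency scanner fed from a vulnerability database.

```python
def parse(version):
    """Turn a dotted version string such as '8.5.3' into a tuple of integers."""
    return tuple(int(part) for part in version.split("."))


AFFECTED_FROM = parse("7.4.0")
# Assumption: "through 8.5.x" is read as "every version below 8.6.0".
FIRST_UNAFFECTED = parse("8.6.0")


def is_affected(version):
    """Return True if `version` lies in the affected range."""
    return AFFECTED_FROM <= parse(version) < FIRST_UNAFFECTED


for candidate in ["7.3.1", "7.4.0", "8.5.3", "8.6.0"]:
    print(candidate, is_affected(candidate))
```

Comparing tuples of integers avoids the classic mistake of comparing version strings as text, where "8.10" would sort before "8.5".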

The research group found a vulnerable dependency in another John Deere site as well, namely in the Supplier pages (supportportaldevr14.deere.com/axis). The vulnerability in question was CVE-2019-0227, related to Apache Axis (v1.4), allowing remote code execution (RCE) via a Server-side Request Forgery (SSRF is A10 on the OWASP Top 10 list). Note that this vulnerability was introduced into Axis version 1.4 back in 2006. That was actually the last release of Apache Axis, which was replaced with Axis2 around that time. And the vulnerability has been known since 2019! So, using such an old version is a serious fault.

And the rest

Finally, an additional vulnerability was found in a CNH Industrial site, exposing an API through which one could manipulate internal data queues on the server. This falls into OWASP Top 10 category A08 Software and data integrity failures.

The site exposed the Sidekiq Server, and its data synchronization queues at https://be-staging-nzqq.cnhindustrial.com/sidekiq/queues (it does not do it anymore, of course). This allowed manipulating the data queues publicly.

Hacking tractors – the epilog

Lessons learnt?

First of all, many companies do not consider themselves to be software development companies, even though they actually are. This is unfortunately pretty common for various vendors of network components and IoT devices. As well as for heavy machinery manufacturers, obviously. Their mindset is still in the hardware domain, even though most of the services they provide are heavily software-based already.

The car industry already realized this and is adding security to their conventional safety-first approach. Vendors of agricultural machinery are obviously lagging behind and are still learning the lesson. Moreover, they seem to be learning it the hard way.

Related to the above, researchers warned that these vulnerabilities can easily jeopardize the food supply chain in the US (or elsewhere). This is of course disputable, at least for vulnerabilities breaking confidentiality (such as sensitive information disclosure). But the risk of hacking tractors is more obvious in the case of an attack targeting the availability or integrity of these services, for instance by putting all John Deere tractors out of business for some time. If something like that happened at the ‘right’ time (say, in times of planting or harvesting), the consequences could be devastating, comparable to a major natural disaster. That could indeed disrupt the food supply chain very badly!

Finally, note that in this article we touched literally all ten elements from the OWASP Top Ten 2021! The research results presented at Def Con are a tangible example of what can happen if one is not aware of and/or does not follow secure coding best practices. Luckily for both John Deere and CNH Industrial, this time it was a group of ethical hackers who revealed and reported these problems to them first. Otherwise, the vulnerabilities could easily have caused a disaster if they had been exploited by the bad guys.

Time-based blind SQL injection, Server-side Request Forgery (SSRF), information exposure, access control weaknesses, cryptography failures, Cross-site Scripting (XSS), HTTP request smuggling… Do they sound like rocket science? They are actually simple things once you understand them. You can learn about them and many other software security issues in our courses. Most of these vulnerabilities are easy to prevent – you just have to know the best practices. And you’ll get exactly that from us!